McCulloch-Pitts Neurons and Linear Separability

Designing M-P neurons for conditional logic, building binary adders from neural networks, and exploring the limits of linear separability.

First homework for CSE 5526: Introduction to Neural Networks at Ohio State, taught by Prof. DeLiang Wang. This one was all about the McCulloch-Pitts (M-P) neuron model and linear separability.

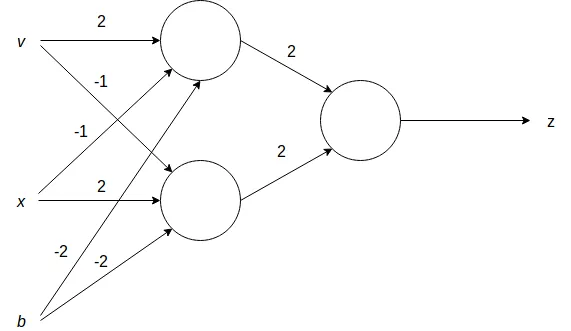

The M-P Neuron Model

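The M-P neuron is about as simple as it gets. It takes binary inputs $x_1, \dots, x_n$, computes a weighted sum, and applies a hard threshold:

$$y = \varphi\left(\sum_{i=1}^{n} w_i x_i + b\right)$$

where the step function $\varphi(v)$ fires if $v \ge 0$, and stays off if $v < 0$.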

Simple as it is, you can implement any Boolean function by wiring enough of these together. The homework had three problems that built up to that idea.
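As a rough sketch of the model, here is one way to write a single M-P neuron in Python. The function name `mp_neuron`, the 0/1 output encoding, and the particular weights for AND, OR, and NOT are assumptions chosen for illustration, not taken from the course materials.

```python
def mp_neuron(inputs, weights, bias):
    """Weighted sum plus bias, then a hard threshold at zero.

    Assumes a 0/1 encoding: the neuron outputs 1 when the sum is >= 0, else 0.
    """
    v = sum(w * x for w, x in zip(weights, inputs)) + bias
    return 1 if v >= 0 else 0


# Illustrative weight choices (assumptions) for three basic gates.
def and_gate(a, b):
    return mp_neuron((a, b), (1, 1), -2)   # fires only when both inputs are 1


def or_gate(a, b):
    return mp_neuron((a, b), (1, 1), -1)   # fires when at least one input is 1


def not_gate(a):
    return mp_neuron((a,), (-1,), 0)       # fires only when the input is 0


if __name__ == "__main__":
    for a in (0, 1):
        for b in (0, 1):
            print(a, b, "AND:", and_gate(a, b), "OR:", or_gate(a, b))
```

Since AND, OR, and NOT together are enough to express any Boolean function, stacking neurons like these is one way to see the claim above.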

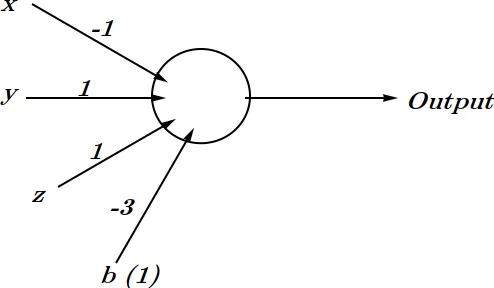

Problem 1: Conditional Output

Design an M-P neuron with three inputs , , whose output is when and , and otherwise.

If you work through the truth table, the neuron only needs to activate when , , and . Every other combination should give . That gives us:

Why ? Because when , the weight contributes , and and each contribute in the target case. That sums to , which exactly cancels the bias: . Any other combination of inputs can’t get the sum above the threshold.
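A design like this is easy to sanity-check by brute force over all eight input combinations. The sketch below assumes a 0/1 encoding and, purely for illustration, a target pattern of `(0, 1, 1)` with weights `(-1, 1, 1)` and bias `-2`. Those specific values are hypothetical stand-ins picked to fit that pattern, not necessarily the homework's numbers.

```python
from itertools import product


def mp_neuron(inputs, weights, bias):
    """Hard-threshold neuron: outputs 1 when the weighted sum plus bias is >= 0."""
    v = sum(w * x for w, x in zip(weights, inputs)) + bias
    return 1 if v >= 0 else 0


# Hypothetical design (assumption): fire only for x1=0, x2=1, x3=1.
TARGET = (0, 1, 1)
WEIGHTS = (-1, 1, 1)
BIAS = -2


def firing_patterns(weights, bias):
    """Return every 3-bit input pattern for which the neuron outputs 1."""
    return [x for x in product((0, 1), repeat=3)
            if mp_neuron(x, weights, bias) == 1]


if __name__ == "__main__":
    for x in product((0, 1), repeat=3):
        print(x, "->", mp_neuron(x, WEIGHTS, BIAS))
```

If the design is right, `firing_patterns(WEIGHTS, BIAS)` contains the target pattern and nothing else.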

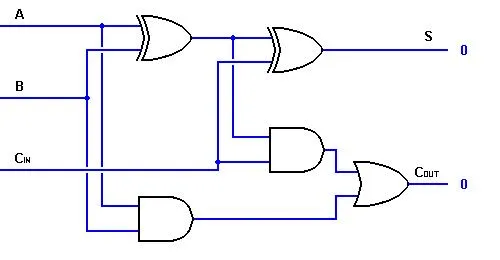

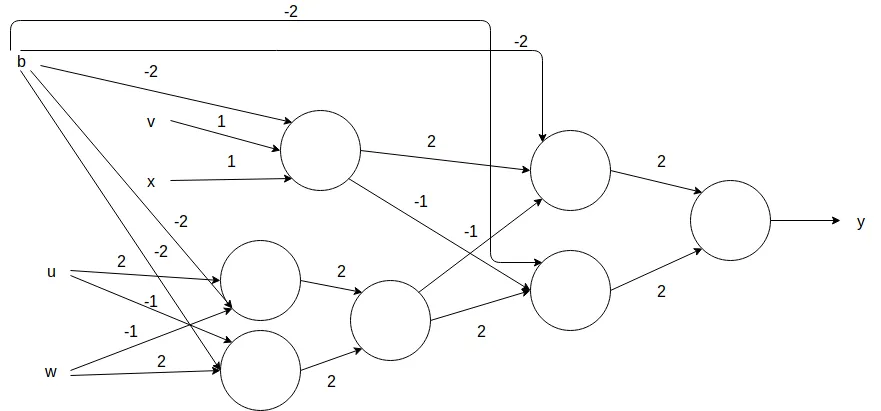

Problem 2: Binary Adder from M-P Neurons

This one was more involved. The problem asks you to design an M-P network that adds two 2-bit binary numbers, where the four input bits are binary and the outputs are the two low-order bits of the sum.

I ended up approaching it as a classic cascading adder:

- A half adder for the least significant bits, producing the low-order sum bit and a carry

- A full adder for the most significant bits, producing the high-order sum bit

The interesting part is that the sum output of each adder stage is an XOR, which a single M-P neuron can’t compute (it’s not linearly separable). So you need at least two neurons for each XOR. The carry is just an AND gate, which one neuron handles fine.
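To make the XOR claim concrete, here is a small brute-force search, assuming 0/1 inputs and a neuron that fires when the weighted sum plus bias is at least zero. The helper name `find_single_neuron` and the integer grid from -3 to 3 are assumptions for illustration. A grid search is not a proof, but it matches the algebra: XOR would need $b < 0$, $w_1 + b \ge 0$, $w_2 + b \ge 0$, and $w_1 + w_2 + b < 0$ all at once, and adding the middle two inequalities contradicts the last.

```python
from itertools import product


def mp_neuron(inputs, weights, bias):
    """Hard-threshold neuron: outputs 1 when the weighted sum plus bias is >= 0."""
    v = sum(w * x for w, x in zip(weights, inputs)) + bias
    return 1 if v >= 0 else 0


def find_single_neuron(truth_table, values=range(-3, 4)):
    """Search a small integer grid for (w1, w2, b) matching the truth table.

    Returns the first match, or None if no setting on the grid works.
    """
    for w1, w2, b in product(values, repeat=3):
        if all(mp_neuron(x, (w1, w2), b) == t for x, t in truth_table.items()):
            return (w1, w2, b)
    return None


AND_TABLE = {(0, 0): 0, (0, 1): 0, (1, 0): 0, (1, 1): 1}
XOR_TABLE = {(0, 0): 0, (0, 1): 1, (1, 0): 1, (1, 1): 0}

if __name__ == "__main__":
    print("AND:", find_single_neuron(AND_TABLE))
    print("XOR:", find_single_neuron(XOR_TABLE))
```

The search finds a setting for AND and comes back empty for XOR.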

What I found satisfying about this problem is how it shows that neural networks and logic circuits are really doing the same thing at different levels of abstraction. You’re implementing AND, OR, and XOR gates with weighted sums and thresholds.
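Here is a minimal sketch of one possible wiring for the cascading adder, assuming 0/1 signals. The bit names `a1`, `a0`, `b1`, `b0`, `s1`, `s0` and the specific weights are hypothetical. The XOR uses two neurons: a hidden AND neuron, plus an output neuron that sees both raw inputs and the hidden unit. This is one construction that works, not necessarily the one from my submitted solution.

```python
from itertools import product


def mp_neuron(inputs, weights, bias):
    """Hard-threshold neuron: outputs 1 when the weighted sum plus bias is >= 0."""
    v = sum(w * x for w, x in zip(weights, inputs)) + bias
    return 1 if v >= 0 else 0


def and_gate(a, b):
    return mp_neuron((a, b), (1, 1), -2)


def xor_gate(a, b):
    # Two neurons: the hidden AND unit vetoes the output when both inputs are 1.
    h = and_gate(a, b)
    return mp_neuron((a, b, h), (1, 1, -2), -1)


def add_2bit(a1, a0, b1, b0):
    """Return (s1, s0), the two low-order bits of (a1 a0) + (b1 b0)."""
    s0 = xor_gate(a0, b0)                 # half adder: sum bit
    c0 = and_gate(a0, b0)                 # half adder: carry
    s1 = xor_gate(xor_gate(a1, b1), c0)   # full adder: sum bit only
    return s1, s0


if __name__ == "__main__":
    for a1, a0, b1, b0 in product((0, 1), repeat=4):
        print(f"{a1}{a0} + {b1}{b0} -> {add_2bit(a1, a0, b1, b0)}")
```

Because only the two low-order bits are kept, the full adder's carry-out never has to be computed, so the only carry in the network is the single AND neuron.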

Problem 3: Linear Separability

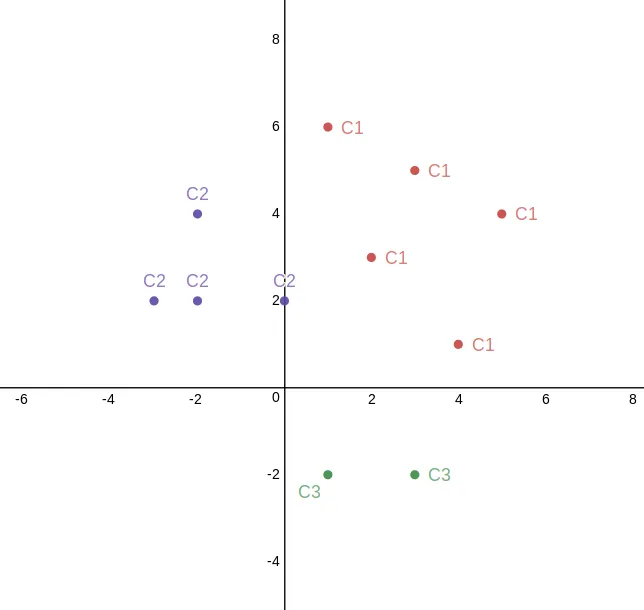

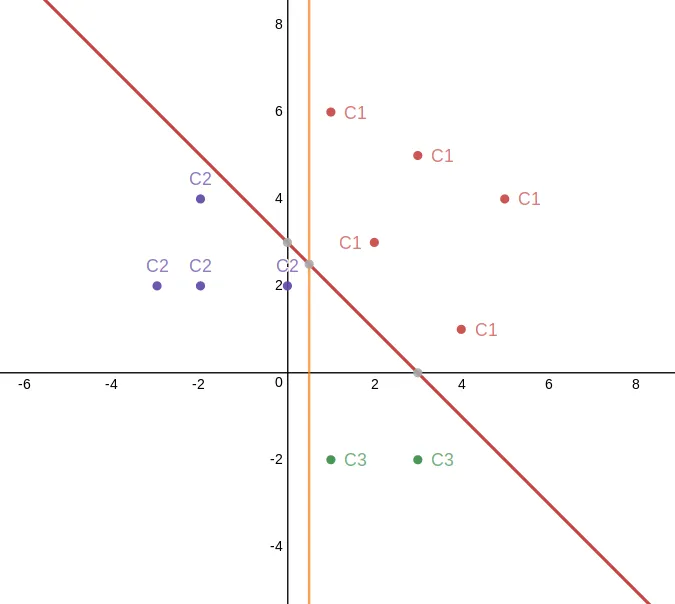

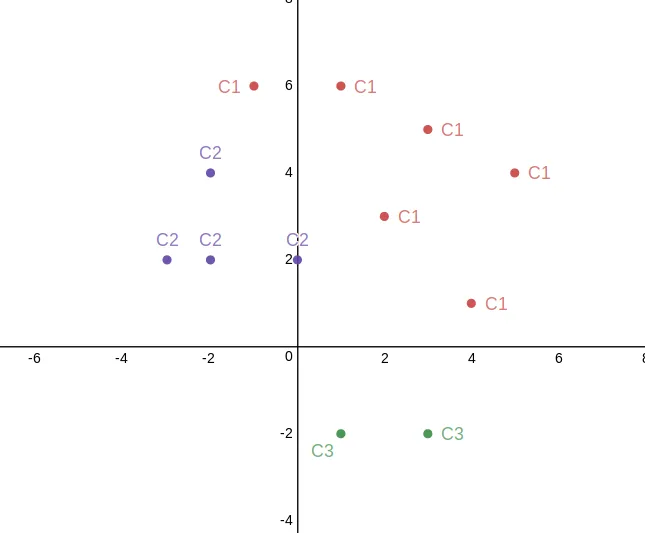

Given three classes of 2D points:

The question: can a single-layer perceptron with three outputs (one-vs-all) separate them?

Part (a): Yes. If you plot these points, you can draw two lines that separate all three classes. Each output neuron implements one linear boundary, so a single-layer perceptron with three output units works here.

Part (b): Add to , and now you’re stuck. This point sits too close to for any pair of linear boundaries to correctly classify everything with a one-vs-all strategy.
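One way to experiment with this kind of question is to train one perceptron per class against the rest and see whether each reaches zero errors. The sketch below uses made-up points, not the homework's data, and the names `train_perceptron` and `one_vs_all_separable` are hypothetical. A `True` result means a separating line was found. A `False` result only means the perceptron rule did not converge within `max_epochs`, which is evidence of non-separability rather than proof.

```python
def train_perceptron(points, targets, max_epochs=1000):
    """Return True if the perceptron rule reaches zero errors within max_epochs."""
    w1 = w2 = b = 0
    for _ in range(max_epochs):
        errors = 0
        for (x, y), t in zip(points, targets):
            out = 1 if w1 * x + w2 * y + b >= 0 else 0
            if out != t:
                # Standard perceptron update, learning rate 1.
                w1 += (t - out) * x
                w2 += (t - out) * y
                b += (t - out)
                errors += 1
        if errors == 0:
            return True
    return False


def one_vs_all_separable(classes):
    """For each class, check whether one output unit can split it from the rest."""
    points = [p for pts in classes.values() for p in pts]
    labels = [name for name, pts in classes.items() for _ in pts]
    return {name: train_perceptron(points, [1 if l == name else 0 for l in labels])
            for name in classes}


# Hypothetical data (assumption): three well-separated clusters.
CLASSES = {"C1": [(0, 0), (1, 0)], "C2": [(0, 4), (1, 4)], "C3": [(5, 2), (6, 2)]}
# Same data with one extra C1 point placed between the two C2 points.
HARDER = {**CLASSES, "C1": CLASSES["C1"] + [(0.5, 4)]}

if __name__ == "__main__":
    print(one_vs_all_separable(CLASSES))
    print(one_vs_all_separable(HARDER))
```

In the second dataset the extra point lies on the segment joining the two `C2` points, so no line can put it on the opposite side from both of them, and the `C1` and `C2` output units can never reach zero errors.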

What I Took Away

The main thing from this homework was getting comfortable with the M-P neuron model and seeing where it breaks down. A single neuron can only separate data with a hyperplane, so if your classes aren’t linearly separable, you’re out of luck with a single layer. The binary adder problem was a nice way to see that you can compose neurons into networks to get around that limitation for Boolean functions specifically, but the general case (like part (b) of problem 3) needs something more.